|

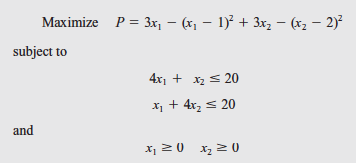

Make constraints and, which are violated, active. This example demonstrates how to determine the KKT point of a specific QP problem:Īssuming all constraints are satisfied, set the gradient equal to zero to attempt to find an optima. Figure generated using Wolfram Mathematica. Plot of the unconstrained objective function. 4 For all convex cases, an NLP solver in the optimization utility GAMS, such as KNITRO, MINOS, or CONOPT, can find solutions for quadratic programming problems.

When there is a range on the allowable values of (in the form, which is the case for image and signal processing applications, trust-region methods are most frequently used. If there are inequality constraints ( ), then the interior point and active set methods are the preferred solution methods. The typical solution technique when the objective function is strictly convex and there are only equality constraints is the conjugate gradient method. 7 More practical (constrained) formulations are more difficult to solve. Solutions can be tested for optimality using Karush-Kuhn-Tucker conditions just as is done for other nonlinear problems: 5īecause quadratic programming problems are a simple form of nonlinear problem, they can be solved in the same manner as other non-linear programming problems.Īn unconstrained quadratic programming problem is most straightforward to solve: simply set the derivative (gradient) of the objective function equal to zero and solve. 6 This is a sufficient condition, meaning that it is not required to be true in order for a local minimum to be the unique global minimum, but will guarantee this property holds if true. To analyze the function’s convexity, one can compute its Hessian matrix and verify that all eigenvalues are positive, or, equivalently, one can verify that the matrix Q is positive definite. If the objective function is convex, then any local minimum found is also the sole global minimum. When there are only inequality constraints ( ), the Lagrangean is: 6 6 The one-half in front of the quadratic term is included to remove the coefficient (2) that results from taking the derivative of a second-order polynomial.Īs for the constraints, the matrix equation contains all of the linear equality constraints, and are the linear inequality constraints.

By convention, any constants contained in the objective function are left out of the general formulation. Put more simply, is the Hessian matrix of the objective function and is its gradient. The objective function is arranged such that the vector contains all of the (singly-differentiated) linear terms and contains all of the (twice-differentiated) quadratic terms. 3,4 The problem was first explored in the early 1950s, most notably by Princeton University's Wolfe and Frank, who developed its theoretical background, 1 and by Markowitz, who applied it to portfolio optimization, a subfield of finance.Ī general quadratic programming formulation contains a quadratic objective function and linear equality and inequality constraints: 2,5,6 QP is widely used in image and signal processing, to optimize financial portfolios, to perform the least-squares method of regression, to control scheduling in chemical plants, and in sequential quadratic programming, a technique for solving more complex non-linear programming problems. 1 The objective function can contain bilinear or up to second order polynomial terms, 2 and the constraints are linear and can be both equalities and inequalities. Quadratic programming (QP) is the problem of optimizing a quadratic objective function and is one of the simplests form of non-linear programming.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed